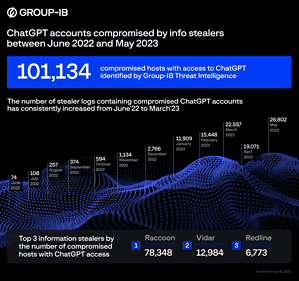

Over 100,000 ChatGPT accounts were compromised and sold on dark web marketplaces within a year. This gives cybercriminals the opportunity to use the AI for their own purposes and, at the same time, to steal the data stored in the chat history, which sometimes includes very sensitive company secrets.

Group-IB experts have identified 101,134 info-stealer-infected devices with saved ChatGPT credentials. Group-IB's threat intelligence platform found these compromised credentials in the logs of information-stealing malware traded on illegal dark web marketplaces over the past year. The number of available logs containing compromised ChatGPT accounts peaked at over 26,800 in May 2023.

ChatGPT: Brisk trade in credentials

Group-IB alone recorded over 100,000 stolen ChatGPT credentials within one year, which were then offered on the dark web (Image: Group-IB).

Group-IB experts emphasize that more and more employees are using the chatbot to streamline their work, be it in software development or business communication. By default, ChatGPT saves the history of user requests and AI responses. Consequently, unauthorized access to ChatGPT accounts can expose confidential or sensitive information that can be exploited in targeted attacks against companies and their employees. According to Group-IB's latest findings, ChatGPT accounts are already gaining popularity in underground communities.

Group-IB's threat intelligence platform stores the industry's largest library of dark web data and monitors cybercriminal forums, marketplaces and closed communities in real time to detect compromised credentials, stolen credit cards, new malware samples and corporate network access, and to identify other important information.

Comment from ESET specialist

“Many ChatGPT users are unaware that their accounts contain a large amount of sensitive information and are of great interest to cybercriminals. All prompts are saved by default. The more information chatbots receive, the more attractive they become to hackers. Users should therefore be careful about what information they enter into cloud-based chatbots and other services. This confidential data can be used for targeted attacks on companies and their employees.

“In addition, so-called info stealers are increasingly used in ChatGPT attacks and even in malware-as-a-service attacks. Info stealers focus on stealing confidential data stored on a compromised system. They look for important information such as cryptocurrency wallet records, credentials and passwords, and saved browser logins.

“The fact that a regular user with free access does not have the option to activate two-factor or multi-factor authentication makes the service extremely vulnerable. It is therefore recommended to disable the chat history feature unless it is absolutely necessary. Additionally, we recommend using a single sign-on option that also uses 2FA,” said Jake Moore, Global Security Advisor at ESET.

More at Group-IB.com