Chatbots like ChatGPT are on the rise: artificial intelligence is getting the better of natural ignorance. Increasingly, intelligent machines are needed to detect when other machines are trying to deceive users. A comment from Chester Wisniewski, Cybersecurity Expert at Sophos.

The AI-based chatbot ChatGPT is making headlines worldwide. Alongside coverage of its stock market and copyright implications, IT security is also a focus of discussion. Despite the manufacturer's security efforts, the tool's recently broadened availability brings new challenges in the form of phishing lures and conversation-based fraud, such as romance scams on social networks or business email compromise (BEC) attacks.

Compromise attacks devised by AI

“One of the biggest risks is that attackers use these platforms to significantly improve the quality of their phishing lures. This makes phishing attacks increasingly difficult to identify, even for observant users,” said Chet Wisniewski, cybersecurity expert at Sophos. “Ultimately, the ever-improving AI chatbots deliver a free upgrade for all kinds of social engineering attacks. Programs like ChatGPT can be used to conduct highly realistic, interactive conversations for criminal purposes via email, or to launch chat attacks via Facebook Messenger, WhatsApp or other chat apps.

“Today, English-speaking targets face the greatest danger. However, it is probably only a matter of time before new versions can produce believable text in all of the world's commonly spoken languages. We've reached a stage where people are increasingly unable to distinguish machine-generated prose from human-written prose - especially when we don't know the other person well.”

A turning point for IT security

This development represents a turning point for existing standards in employee training and IT security. Gone are the days when organizations could rely on employees to play an active role in defending against cyberattacks by watching for grammatical errors, spelling mistakes, or odd phrasing. Chatbots like ChatGPT undermine one of the last effective elements of training programs - and do so very thoroughly, as the following two examples illustrate.

The original lure from a BEC (Business Email Compromise) scammer, who is attempting to redirect someone else's paycheck to the attacker's account, is fairly easy to spot as "non-genuine." For comparison, the second variant below was generated by entering the corresponding key points into ChatGPT.

Standard scam email from an attacker

Hi ….,

I changed my banks because of my old account is no longer good to accept dd payment and I'll like to change my paycheck direct deposit details, can the change be effective for the current pay date?

Thanks,….

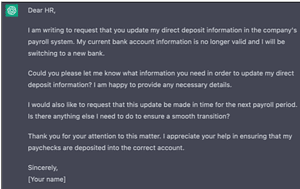

Text written by the AI ChatGPT

Dear HR,

I am writing to request that you update my direct deposit information in the company's payroll system. My current bank account information is no longer valid and I will be switching to a new bank.

Could you please let me know what information you need in order to update my direct deposit information? I am happy to provide any necessary details.

I would also like to request that this update be made in time for the next payroll period. Is there anything else I need to do to ensure a smooth transition?

Thank you for your attention to this matter. I appreciate your help in ensuring that my paychecks are deposited into the correct account.

Sincerely,

[your name]

“The example reads like an email from a real person, with good punctuation, spelling, and grammar. Is it perfect? No. Is it good enough? Definitely! With scammers already making millions from their poorly crafted lures, it's easy to imagine the new dimension this AI-driven communication opens up. Imagine chatting with this bot via WhatsApp or Microsoft Teams. Would you have recognized the machine?” Wisniewski says of his “creative work” with the chatbot.

AIs can fool users almost perfectly

The fact is that almost all types of applications in the field of AI have already reached a point where they can fool a human almost 100% of the time. The quality of “conversation” that can be had with ChatGPT is remarkable, and the ability to generate fake human faces that are almost indistinguishable (to humans) from real photos, for example, is also already a reality. The criminal potential of such technologies is immense, as an example makes clear: Criminals who want to carry out fraud via a shell company simply generate 25 faces and use ChatGPT to write their biographies. Add a few fake LinkedIn accounts and you're good to go.

Conversely, the "good side" must also turn to technology in order to stand up to it. "We all need to put on our Iron Man suits if we're going to brave the increasingly dangerous waters of the internet," Wisniewski said. “It increasingly looks like we need machines to detect when other machines are trying to fool us. Hugging Face has developed an interesting proof of concept that can recognize text generated with GPT-2 - suggesting that similar techniques could be used to recognize GPT-3 output.”

Sad but true: AI has put the final nail in the coffin of end-user security awareness.

“Am I saying that we should stop this altogether? No, but we have to lower our expectations. It certainly doesn't hurt to follow the IT security best practices that have applied so far and often still do. We need to encourage users to be even more suspicious than before and, most importantly, to scrupulously check messages that request access to personal information or involve money. It's about asking questions, asking for help, and taking those few extra moments needed to confirm that things really are as they seem. It's not paranoia, it's a refusal to be ripped off by crooks.”

More at Sophos.com

About Sophos

More than 100 million users in 150 countries trust Sophos. We offer the best protection against complex IT threats and data loss. Our comprehensive security solutions are easy to deploy, use and manage, and they offer the lowest total cost of ownership in the industry. Sophos offers award-winning encryption solutions as well as security solutions for endpoints, networks, mobile devices, email and the web. These are backed by SophosLabs, our worldwide network of in-house analysis centers. The Sophos headquarters are in Boston, USA and Oxford, UK.